In-Network Techniques for Highly Reliable Datacenter Networks

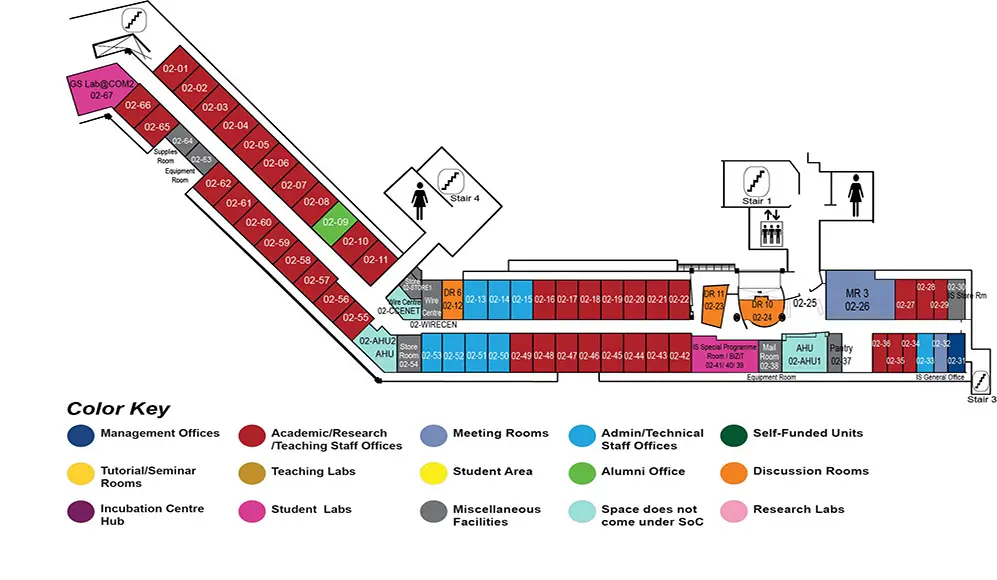

COM2 Level 2

MR3, COM2-02-26

Abstract:

Datacenters power today's large-scale Internet services, such as web search, video streaming, e-commerce, and social networks, for billions of users around the world. Within a datacenter, these services are realized through distributed applications running on thousands of servers connected by the datacenter network. The data transfers (called flows) between these distributed applications need to complete as quickly as possible because the flow completion time (FCT) directly impacts user experience, and thus revenue. Datacenter networks therefore operate under stringent service-level agreements (SLAs) that place a tight bound on the worst-case (tail) FCTs. Bounding tail FCTs in a datacenter network is challenging because network congestion events can increase FCTs arbitrarily and violate the SLAs. Further, link failures, which are the norm in datacenter networks, cause packet loss that also increases FCTs several-fold. In this thesis, we propose three in-network techniques to mitigate the increase in tail FCTs in the face of microbursts and link failures.

First, we propose BurstRadar to detect and characterize microbursts occurring in the network. Microbursts are short-lived, transient congestion events that occur unpredictably and increase tail FCTs. BurstRadar's key insight is that a microburst is localized to a switch's egress port queue and can therefore be monitored efficiently in the switch dataplane by capturing telemetry information for only the packets involved in the microburst. Our evaluation on a multi-gigabit testbed shows that BurstRadar incurs 10 times less data collection and processing overhead than existing solutions.
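To make the idea of capturing telemetry only for burst packets concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not BurstRadar's actual dataplane logic: it assumes a microburst can be identified by the egress queue depth crossing a fixed threshold, and the names `PacketRecord`, `capture_burst_packets`, and `threshold` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PacketRecord:
    """Telemetry kept for one packet (hypothetical field set)."""
    flow_id: str
    enqueue_time_us: int
    queue_depth: int


def capture_burst_packets(events, threshold):
    """Group telemetry records into bursts.

    events: iterable of (time_us, flow_id, queue_depth_at_enqueue),
            ordered by time, for a single egress port queue.
    threshold: queue depth at or above which a packet is assumed to be
               part of a microburst (an assumption of this sketch).
    Packets seen while the queue is below the threshold are not recorded.
    """
    bursts = []
    current = []
    for time_us, flow_id, depth in events:
        if depth >= threshold:
            current.append(PacketRecord(flow_id, time_us, depth))
        elif current:
            # Queue drained below the threshold: the burst has ended.
            bursts.append(current)
            current = []
    if current:
        bursts.append(current)
    return bursts


# Toy trace of one egress queue: (time_us, flow_id, queue_depth).
events = [
    (0, "a", 2), (1, "b", 3), (2, "a", 12), (3, "c", 15),
    (4, "a", 11), (5, "b", 4), (6, "c", 10), (7, "a", 1),
]
bursts = capture_burst_packets(events, threshold=10)
recorded = sum(len(b) for b in bursts)
print(f"{len(bursts)} bursts, {recorded} of {len(events)} packets recorded")
```

In this toy trace only the packets enqueued during congestion are recorded, which is the source of the reduced collection overhead; the real system runs in switch hardware, and its detection criterion may differ from the threshold assumed here.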

Besides microbursts, link failures, whether complete (fail-stop failure) or partial (gray failure), cause packet drops that increase the tail FCT several-fold. Existing techniques for managing fail-stop link failures cannot completely eliminate packet loss during such failures. To this end, we propose Shared Queue Ring (SQR), an on-switch mechanism that completely eliminates packet loss during fail-stop link failures by diverting the affected flows seamlessly to alternative paths. Our evaluation on a hardware testbed shows that SQR can completely mask link failures and reduce tail FCT by up to 4 orders of magnitude for latency-sensitive flows.

When links fail partially (gray failure), random packet drops occur due to bit corruption, and such packet loss is significant in datacenter networks. Previous attempts to mitigate corruption packet loss seek to avoid faulty links at the cost of reduced link capacity and disruption to the rest of the network. In this thesis, we investigate the feasibility and tradeoffs of the classical loss recovery strategy of link-local retransmission in the context of datacenter networks. We present the design and implementation of LinkGuardian, a dataplane-based protocol that detects packets lost due to corruption and retransmits them while preserving packet ordering. Our results show that for a 100G link with a loss rate of 0.1%, LinkGuardian can reduce the loss rate by up to 6 orders of magnitude while incurring only an 8% reduction in the link's effective speed. By detecting and eliminating tail packet losses, and avoiding timeouts, LinkGuardian improves the 99.9th percentile FCT for TCP and RDMA by 51x and 66x respectively.
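The classical strategy of link-local retransmission with in-order delivery can be sketched as follows. This is a simplified Python model under explicit assumptions, not LinkGuardian's protocol: it assumes per-link sequence numbers, a sender-side copy of every packet, instantaneous and loss-free retransmission, and a separate signal that reveals losses at the tail of a transfer. The function name `deliver_in_order` and its parameters are hypothetical.

```python
def deliver_in_order(packets, lost_once):
    """Simulate one link hop with link-local retransmission.

    packets: payloads sent in order; the list index is the sequence number.
    lost_once: set of sequence numbers whose first transmission is dropped
               because of corruption (retransmissions are assumed to succeed).
    Returns (payloads delivered to the next hop, number of retransmissions).
    """
    sender_copies = dict(enumerate(packets))  # kept until delivery is confirmed
    delivered = []
    next_expected = 0
    retransmissions = 0

    for seq, payload in enumerate(packets):
        if seq in lost_once:
            continue  # corrupted on the wire; the receiver never sees it
        # A sequence number ahead of next_expected reveals a gap. The
        # arriving packet is held back until the gap has been refilled.
        while next_expected < seq:
            delivered.append(sender_copies[next_expected])
            next_expected += 1
            retransmissions += 1
        delivered.append(payload)
        next_expected = seq + 1

    # Tail losses: no later packet arrives to reveal the gap, so this
    # sketch assumes the receiver learns the total count some other way.
    while next_expected < len(packets):
        delivered.append(sender_copies[next_expected])
        next_expected += 1
        retransmissions += 1

    return delivered, retransmissions


sent = ["p0", "p1", "p2", "p3", "p4"]
received, resent = deliver_in_order(sent, lost_once={1, 4})
print(received, resent)
```

Because packets that arrive behind a gap are held until the missing ones are refilled, the next hop sees the original order and the transport layer observes neither loss nor reordering. The final loop stands in for tail-loss detection, which matters because a lost last packet would otherwise be recovered only by an end-to-end timeout.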

In summary, this thesis demonstrates that datacenter networks can perform reliably in the face of transient congestion events and link failures by using in-network techniques that leverage dataplane-programmable switches.