Supporting Generic Clustering And Similarity Search For Massive Datasets

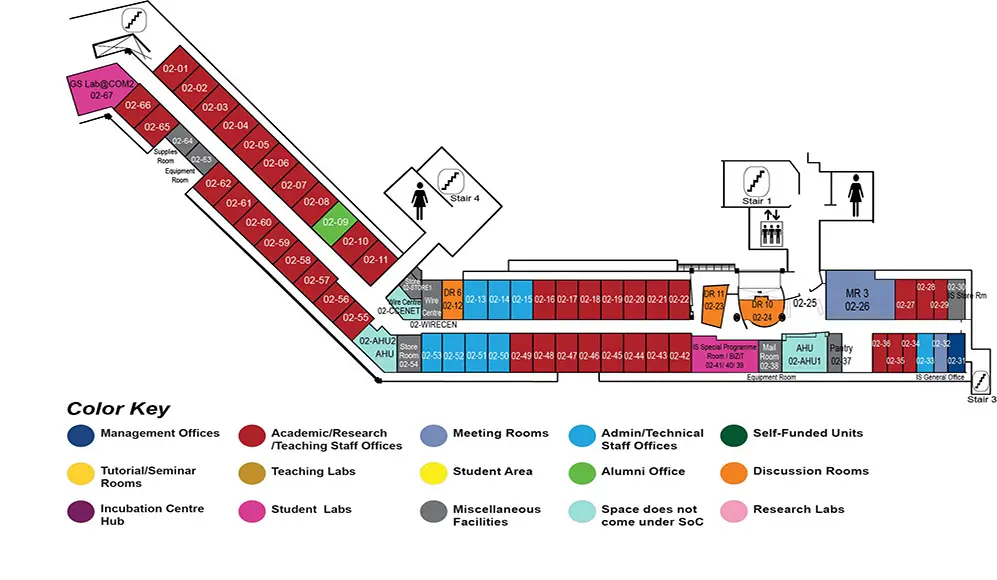

COM2 Level 2

MR3, COM2-02-26

Abstract:

Clustering and Similarity Search (or Nearest Neighbor Search, NNS) are two fundamental yet vital problems in big data analytics. Both of them have wide applications in many fields, including machine learning, pattern recognition, information retrieval, and bioinformatics. Big data, in its nature, has very large cardinality and dimensionality, and it usually consists of various types of data. These characteristics require the analytic methods to be efficient and versatile for various distance functions.

However, most existing clustering methods for large scale data are customized for a specific distance/similarity function and too little work has been devoted to tackle the generic functionality to simultaneous support many distances. Moreover, there exist NNS methods like k-Nearest Neighbor Graphs (k-NNG) that can support generic distance measure, but they usually cannot handle large scale data. In this thesis, we explore the generic solutions for the clustering and NNS problems on massive datasets.

We first propose a Generic distributEd clustEring frameworK (GEEK) to process massive amounts of data with different distance functions. Different from the traditional perspective that adopt Locality-Sensitive Hashing (LSH) techniques in clustering to speed up distance calculation, we consider the connections between buckets in LSH instead of only the objects in a single bucket. To deal with different data types, GEEK first converts data in the original feature space into a unified format of buckets; then, we design a new Seeding method based on simILar bucKets (SILK) to determine initial seeds. With these well-selected initial seeds, GEEK only needs a one-pass data assignment to get the final clusters. By harvesting the computational power of distributed environment and modern hardware like GPUs, GEEK can support clustering over billion-scale datasets. Evaluations over five large-scale real-life datasets demonstrate the superior performance of GEEK. To improve the performance of GEEK on sparse data, we further investigate the clustering problem on sparse data sets with enormous cardinality and ultra-high dimensionality.

We propose a new partitioning-based method called $k$-FreqItems with a new sparse center representation called FreqItem which chooses a set of high-frequency, non-zero dimensions to represent the cluster center, making clustering results easily interpretable. Unlike most existing clustering algorithms which adopt Euclidean distance as the similarity measure, $k$-FreqItems uses the popular Jaccard distance for comparing sets.

Moreover, since the efficiency and effectiveness of $k$-FreqItems are highly dependent on an initial set of representative seeds, we introduce SILK$+$ to deal with the seeding problem of $k$-FreqItems. SILK$+$ uses LSH functions for oversampling and identifies frequently co-occurred data in LSH buckets to determine a set of promising seeds, allowing $k$-FreqItems to converge swiftly in an iterative process. Experimental results over seven real-world sparse data sets show that the $k$-FreqItems with SILK$+$ is efficient yet effective. %, especially when $k$ increases.

Finally, with the support of GEEK, we incorporate a generic similarity search framework GENIE with a two-level distributed indexing structure and propose $d$GENIE to scale up to billion-scale data. In this regard, we make three attractive designs beyond GENIE: (1) We design a LSH scheme named C2LSH$^+$ for efficient cluster indexing and search with a theoretical guarantee. (2) We propose a new measure, collision score, to evaluate the closeness between query objects and clusters and further design a cost model to assign different workloads for different queries. (3) We exploit the parallelism with multiple computing nodes and GPUs and implement $d$GENIE on a distributed CPU-GPU platform for massive data. Extensive experiments over four large-scale real-life data sets demonstrate the efficiency and effectiveness of $d$GENIE and its generic nature.

Finally, we conclude this thesis and show several possible directions of improvement as our future works.