Deep Neural Networks for Relation Extraction

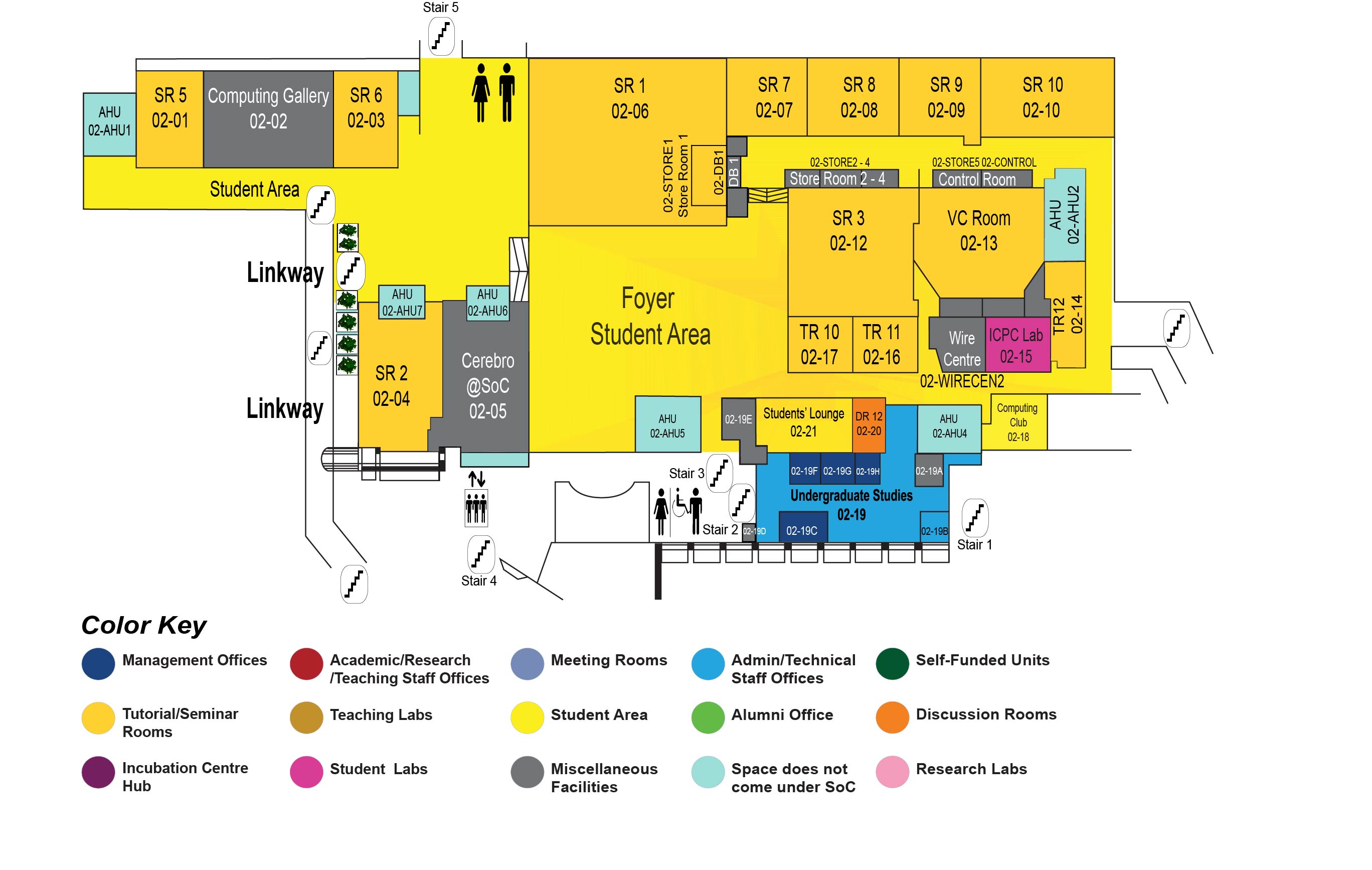

COM1 Level 2

SR2, COM1-02-04

Abstract:

The Web contains a huge amount of unstructured text, much of which encodes structured information. The task of relation extraction is to extract relation tuples, each consisting of two entities and a relation between them, from free text. In this thesis proposal, I will present how we tackle this task using novel deep neural network models.

First, we use a pipeline approach for this task. We assume that the entities have already been identified by an external named entity recognition system. Then, we propose a syntax-focused multi-factor attention model to determine the relation (if any) between two entities. We use the syntactic distance of words from the entities to determine their importance in establishing the relation between the two given entities. We also use multi-factor attention to focus on multiple pieces of evidence present in a text to support the relation. Our proposed model achieves significant improvements over prior work on widely used relation extraction datasets.
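To make the idea of syntactic distance concrete, here is a minimal sketch, not the model described in this talk. It assumes the dependency parse is given as a list of head indices, and it uses a hypothetical weighting rule (halve the weight per hop, drop words beyond a window) purely for illustration; the function names, the `window` parameter, and the example parse are all assumptions.

```python
from collections import deque


def tree_distances(heads, source):
    """Hop distance from token `source` to every token in a dependency tree.

    heads[i] is the index of the head of token i, or -1 for the root.
    """
    n = len(heads)
    # Build an undirected adjacency list from the head pointers.
    adj = [[] for _ in range(n)]
    for child, head in enumerate(heads):
        if head >= 0:
            adj[child].append(head)
            adj[head].append(child)

    # Breadth-first search gives the shortest path length in the tree.
    dist = [None] * n
    dist[source] = 0
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for nxt in adj[node]:
            if dist[nxt] is None:
                dist[nxt] = dist[node] + 1
                queue.append(nxt)
    return dist


def distance_weights(heads, entity_index, window=2):
    """Hypothetical importance weights: 1 / 2^d within `window` hops, else 0."""
    weights = []
    for d in tree_distances(heads, entity_index):
        if d is not None and d <= window:
            weights.append(1.0 / (2 ** d))
        else:
            weights.append(0.0)
    return weights


# Illustrative parse of "Obama was born in Hawaii" (assumed, not from a parser):
# Obama -> born, was -> born, born = root, in -> Hawaii, Hawaii -> born
tokens = ["Obama", "was", "born", "in", "Hawaii"]
heads = [2, 2, -1, 4, 2]
weights_for_obama = distance_weights(heads, entity_index=0)
```

In this toy parse, "born" sits one hop from both entities, so it receives the highest weight among the non-entity words, which matches the intuition that syntactically close words carry most of the evidence for the relation.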

Second, we tackle the task of joint entity and relation extraction, where entities are not identified beforehand. A sentence may contain multiple relation tuples, and these tuples may share one or both of their entities. Extracting such tuples from a sentence is difficult, and entities that are shared or overlap among the tuples make it more challenging. We propose two approaches that use encoder-decoder networks for the joint extraction of entities and relations. In the first approach, we propose a representation scheme for relation tuples that enables the decoder to generate one token at a time (like machine translation models) and still extract all the tuples present in a sentence, with full entity names of different lengths and with overlapping entities. In the second approach, we propose a pointer network-based decoding scheme in which an entire tuple is generated at every time step. Our proposed models outperform prior work on widely used relation extraction datasets.
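The abstract does not spell out the representation scheme, so the following is only a sketch of how relation tuples could be flattened into a token sequence that a decoder emits one token at a time. The separator tokens `;` and `|`, the function names, and the example tuples are assumptions made for illustration.

```python
FIELD_SEP = ";"  # hypothetical token separating entity 1, entity 2, relation
TUPLE_SEP = "|"  # hypothetical token separating consecutive tuples


def linearize(tuples):
    """Flatten (entity1, entity2, relation) tuples into one token sequence."""
    tokens = []
    for i, (entity1, entity2, relation) in enumerate(tuples):
        if i > 0:
            tokens.append(TUPLE_SEP)
        tokens.extend(entity1.split())  # multi-word entities stay intact
        tokens.append(FIELD_SEP)
        tokens.extend(entity2.split())
        tokens.append(FIELD_SEP)
        tokens.append(relation)
    return tokens


def parse(tokens):
    """Recover tuples from a token sequence, skipping malformed groups."""
    tuples = []
    fields = [[]]
    # A trailing tuple separator flushes the last group.
    for tok in list(tokens) + [TUPLE_SEP]:
        if tok == TUPLE_SEP:
            if len(fields) == 3 and all(fields):
                tuples.append(tuple(" ".join(f) for f in fields))
            fields = [[]]
        elif tok == FIELD_SEP:
            fields.append([])
        else:
            fields[-1].append(tok)
    return tuples


# Two tuples that share the same first entity (an overlapping entity).
example = [
    ("Barack Obama", "Hawaii", "born_in"),
    ("Barack Obama", "United States", "president_of"),
]
sequence = linearize(example)
recovered = parse(sequence)
```

Because a shared entity is simply written out again in each tuple, a flat sequence of this kind can express overlapping entities and entity names of any length, at the cost of a longer output.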

Finally, we propose to extend our work to multi-hop relation extraction. Most relation extraction research focuses on sentence-level extraction, where the two entities of a relation tuple must appear in the same sentence. This assumption is overly strict: for many relations, we may not find both entities in the same sentence. We plan to work on a multi-hop relation extraction task to address this problem. In multi-hop relation extraction, the two entities of a relation tuple may appear in two different documents, provided these documents are connected through other documents and common entities. We can find a chain of links from the start entity in the start document to the end entity in the end document, via the common entities in the connecting documents. We can then find the relation for each link in this chain and, finally, the relation between the start and end entities. This multi-hop approach can help to find facts about more relations than sentence-level extraction can.
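As a rough illustration of the chain-finding step only, the sketch below runs a breadth-first search over entities that co-occur in documents. It assumes each document has already been reduced to the set of entities it mentions; the function names and the toy documents are hypothetical, and classifying the relation on each link is left out.

```python
from collections import deque


def find_entity_chain(docs, start, end):
    """Return a shortest chain of (entity_a, doc_id, entity_b) links, or None.

    docs maps a document id to the set of entities mentioned in it.
    """
    if start == end:
        return []
    parent = {start: None}  # entity -> (previous entity, connecting document)
    queue = deque([start])
    while queue:
        entity = queue.popleft()
        for doc_id in sorted(docs):  # sorted for deterministic output
            mentioned = docs[doc_id]
            if entity not in mentioned:
                continue
            for other in sorted(mentioned):
                if other in parent:
                    continue
                parent[other] = (entity, doc_id)
                if other == end:
                    return _rebuild(parent, end)
                queue.append(other)
    return None


def _rebuild(parent, end):
    """Walk the parent pointers back from `end` to recover the chain."""
    links = []
    node = end
    while parent[node] is not None:
        prev, doc_id = parent[node]
        links.append((prev, doc_id, node))
        node = prev
    links.reverse()
    return links


# Toy corpus: the two target entities never share a document.
docs = {
    "d1": {"Marie Curie", "Warsaw"},
    "d2": {"Warsaw", "Poland"},
}
chain = find_entity_chain(docs, "Marie Curie", "Poland")
```

Here the chain passes through the common entity "Warsaw". A full system would then predict a relation for each link and combine them into the relation between the start and end entities.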