PaperRobot: Automated Scientific Knowledge Graph Construction and Paper Writing

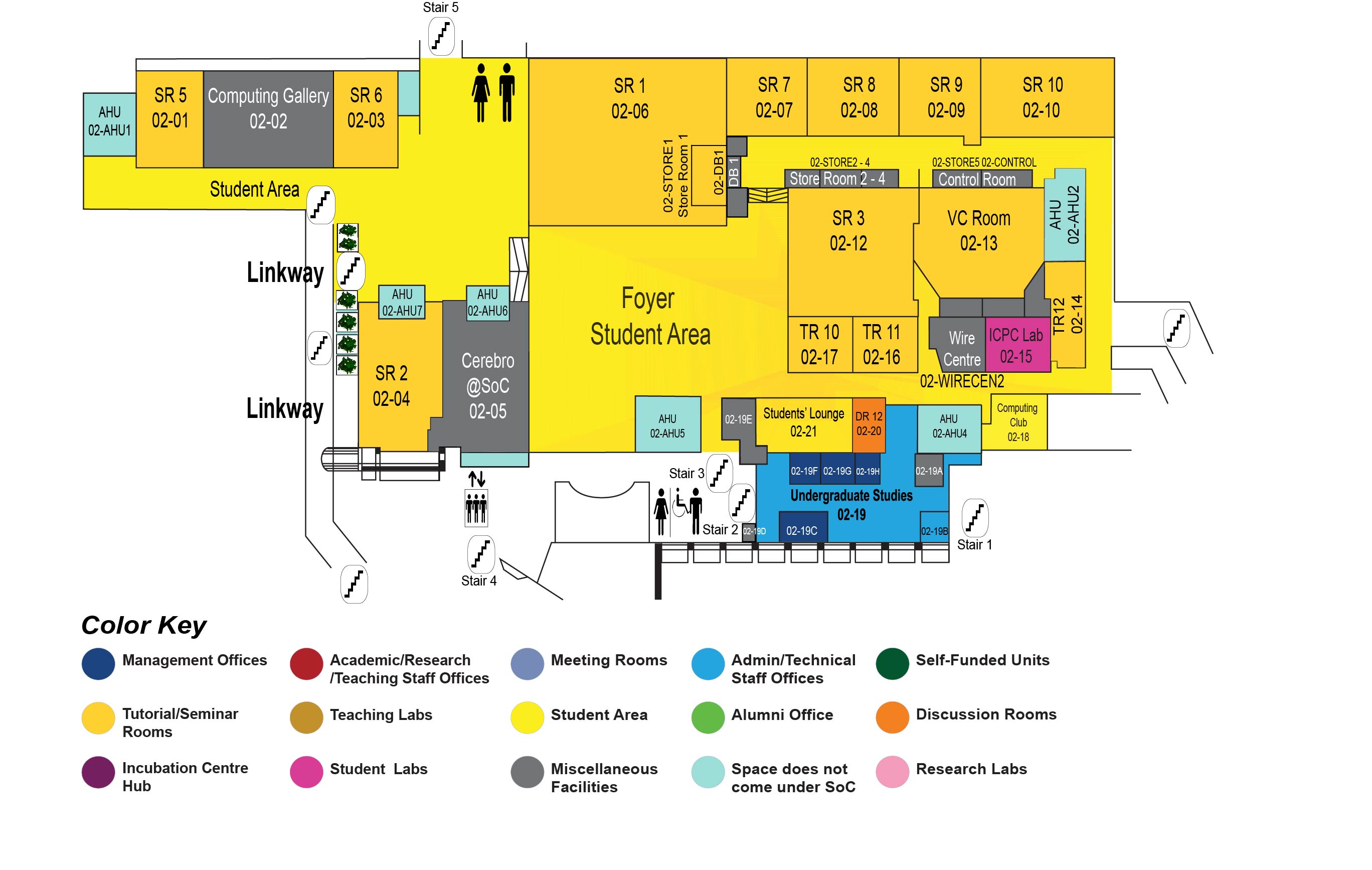

COM1 Level 2

SR2, COM1-02-04

Abstract:

The ambitious goal of this work is to speed up scientific discovery and production by building PaperRobot, a system that addresses three main tasks.

The first task is to read existing papers. Scientists find it difficult to keep up with the overwhelming volume of literature. For example, more than 500K biomedical papers are published every year, but scientists read, on average, only 264 papers per year (1 out of 5000 available papers). PaperRobot automatically reads existing papers to build background knowledge graphs (KGs) through entity and relation extraction. Constructing knowledge graphs from scientific literature is generally more challenging than in the general news domain, since it requires broader acquisition of domain-specific knowledge and deeper understanding of complex contexts. To better encode contextual information and external background knowledge, we propose a novel knowledge base (KB)-driven tree-structured long short-term memory network (Tree-LSTM) framework and a graph convolutional network model, incorporating two new types of features: (1) dependency structures to capture wide contexts; and (2) entity properties (types and category descriptions) drawn from external ontologies via entity linking.

The second task is to automatically create new ideas. Foster et al. (2015) show that more than 60% of 6.4 million papers in biomedicine and chemistry are about incremental work. This inspires us to automate the incremental creation of new ideas by predicting new links in background KGs, based on a new entity representation that combines KG structure and unstructured contextual text.

Finally, we move on to the last, most ambitious, and most fun task: writing a new paper about the new ideas. The goal of this final step is to communicate the new ideas to the reader clearly, which is very difficult to do; many scientists are, in fact, bad writers (Pinker, 2014).
Using a novel memory-attention network architecture, PaperRobot automatically writes a new paper abstract from an input title along with predicted related entities, then writes the conclusion and future work based on that abstract, and finally predicts a new title for a future follow-on paper. We choose biomedical science as our target domain due to the sheer volume of available papers. Turing tests show that PaperRobot-generated outputs are sometimes chosen over human-written ones, and that most paper abstracts require only minimal edits from domain experts to become highly informative and coherent.
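
The link-prediction step described above combines KG structure and contextual text into one entity representation. The following is a minimal Python sketch under stated assumptions, not PaperRobot's implementation: it assumes the structure-based and text-based entity vectors have already been computed by upstream encoders, mixes them with an element-wise sigmoid gate (`gate_logits` is a hypothetical learned parameter), and ranks candidate tail entities with a TransE-style score, the negative L1 distance between h + r and t. All function names, entity names, and vectors are illustrative.

```python
import math


def sigmoid(x):
    """Logistic function mapping a real number to (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def gated_entity_embedding(structural, textual, gate_logits):
    """Hypothetical gated combination of two entity views (assumption).

    For each dimension i: g_i = sigmoid(gate_logits[i]) and
    e_i = g_i * structural[i] + (1 - g_i) * textual[i].
    """
    if not (len(structural) == len(textual) == len(gate_logits)):
        raise ValueError("all vectors must have the same dimension")
    combined = []
    for s, t, z in zip(structural, textual, gate_logits):
        g = sigmoid(z)
        combined.append(g * s + (1.0 - g) * t)
    return combined


def transe_score(head, relation, tail):
    """TransE-style plausibility: -||h + r - t||_1 (higher is more plausible)."""
    return -sum(abs(h + r - t) for h, r, t in zip(head, relation, tail))


def rank_candidate_tails(head, relation, candidates):
    """Sort candidate tail entities from most to least plausible new link."""
    scored = [(transe_score(head, relation, emb), name)
              for name, emb in candidates.items()]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [name for _, name in scored]


if __name__ == "__main__":
    # Toy 2-d vectors; a zero gate logit weights both views equally.
    head = gated_entity_embedding([1.0, 0.0], [0.0, 1.0], [0.0, 0.0])
    relation = [0.5, -0.5]
    candidates = {"entity_A": [1.0, 0.0], "entity_B": [0.0, 1.0]}
    print(head)  # [0.5, 0.5]
    print(rank_candidate_tails(head, relation, candidates))  # ['entity_A', 'entity_B']
```

In this sketch the gate lets each dimension decide how much to trust graph structure versus text; an entity with few KG neighbors could, for instance, lean more on its textual description. A trained system would learn the embeddings and gate jointly rather than take them as fixed inputs.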

Biodata:

Heng Ji is a professor in the Computer Science Department of the University of Illinois at Urbana-Champaign. She received her B.A. and M.A. in Computational Linguistics from Tsinghua University, and her M.S. and Ph.D. in Computer Science from New York University. Her research interests focus on Natural Language Processing, especially Information Extraction and Knowledge Base Population. She was selected as a "Young Scientist" and a member of the Global Future Council on the Future of Computing by the World Economic Forum in 2016 and 2017. Her awards include the "AI's 10 to Watch" Award from IEEE Intelligent Systems in 2013 and an NSF CAREER award in 2009. She has coordinated the NIST TAC Knowledge Base Population task since 2010. She is an associate editor for IEEE/ACM Transactions on Audio, Speech, and Language Processing, and has served as Program Committee Co-Chair of many conferences, including NAACL-HLT 2018.