Adversarial Computer Vision at Facebook

Research Manager, Facebook AI (Computer Vision, New York and Cambridge offices)

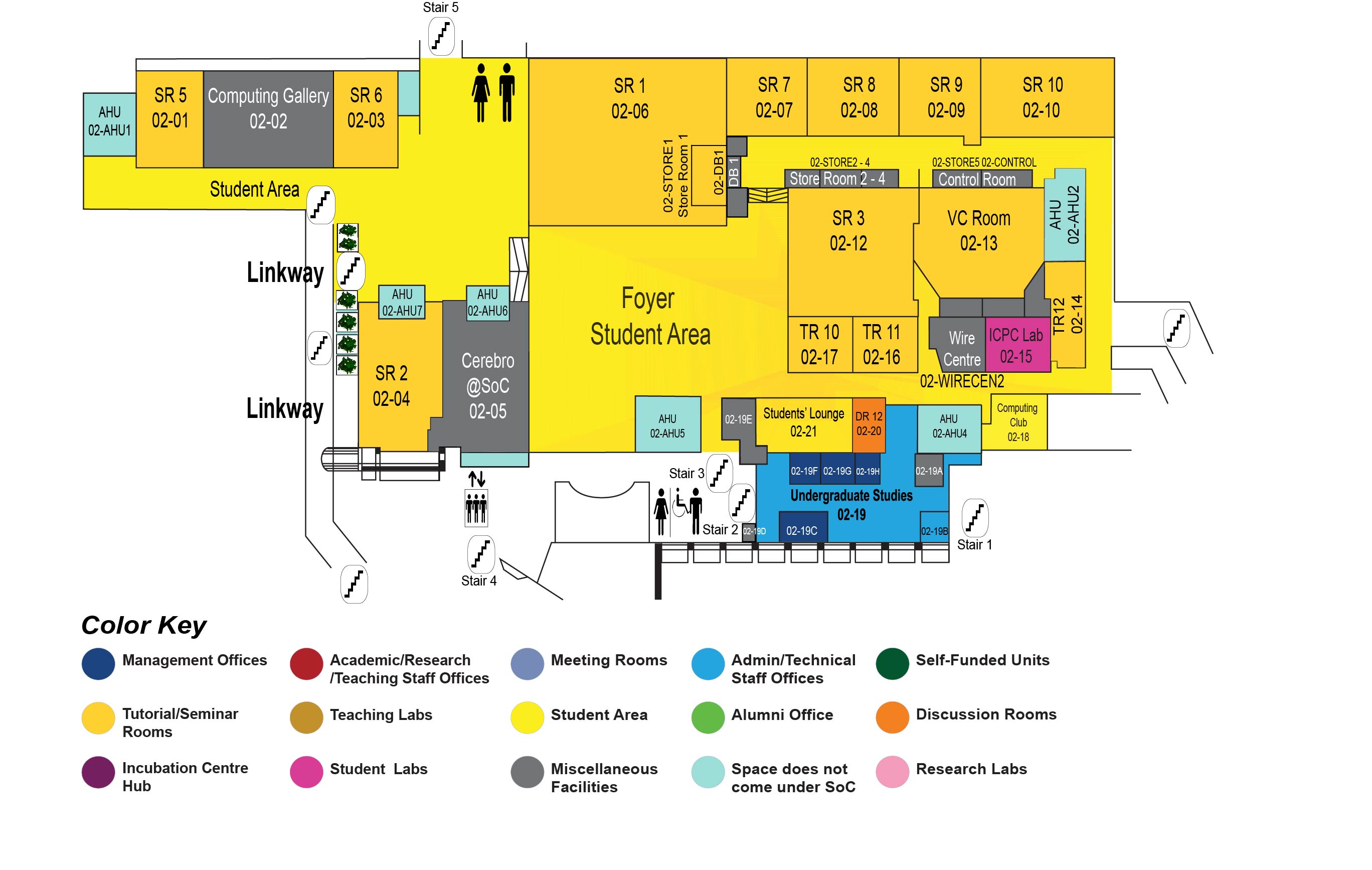

COM1 Level 2

SR2, COM1-02-04

Abstract:

This talk will shed some light on how the Computer Vision team at Facebook AI is working to detect adversarial media. Facebook takes the integrity of the media posted on its platform seriously and has invested, and continues to invest, significantly in protecting it. In particular, this talk will discuss the cutting-edge research that the Computer Vision team is conducting to protect the integrity of images and videos posted on the Facebook platform. This research includes detecting manipulated media, ranging from deepfakes and GAN-generated media to other forms of tampering, as well as inferring the intent, motives, and semantics of a Facebook post. We will focus on model generalization, with the aim of avoiding bespoke models that break when faced with future attack vectors.

Biodata:

Dr. Ser-Nam Lim manages Facebook AI's Computer Vision teams in the New York and Cambridge offices. His current research interests lie in understanding generative models, particularly in large-scale generation, anomaly detection, representation learning, media manipulation, and multimodal analysis. He earned his PhD in Computer Vision from the University of Maryland, College Park, in 2005. Previously, he spent a decade at GE Research, where he managed the Computer Vision lab, led several major company research initiatives, and served as PI of the IARPA CORE3D program. At Facebook, he currently leads his team's effort to detect media manipulation that has the potential to spread misinformation.