Structured Information Extraction for Scientific Documents

AS6 Level 5

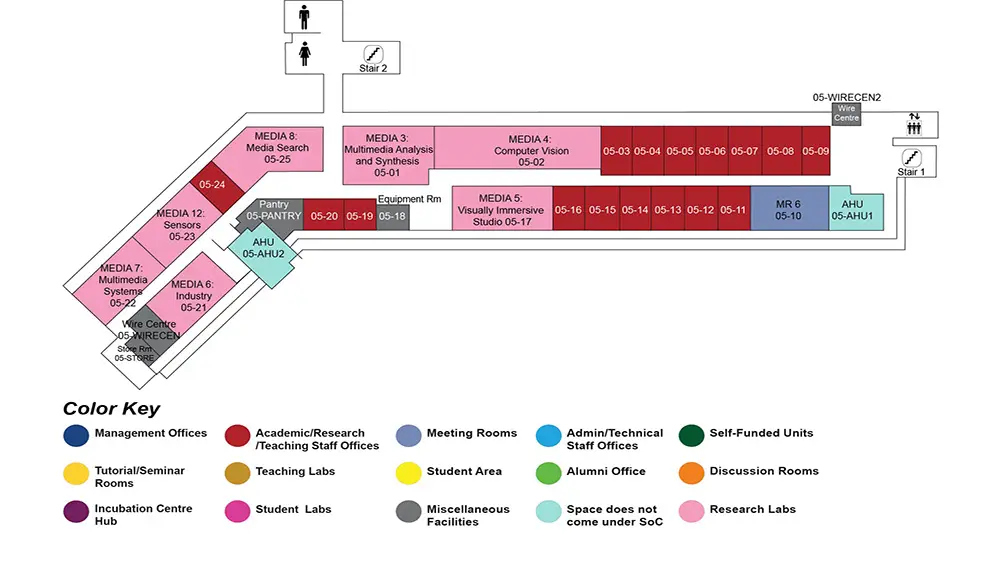

MR6, AS6-05-10

Abstract:

Information extraction from scientific documents is crucial for many downstream tasks. Recent developments in deep learning have led to the rapid replacement of statistical, information-retrieval-based models by semantic deep neural models. Models that use continuous vector word spaces and can represent complex hypotheses have shown significant improvements on many information extraction tasks. However, they fail to scale up to larger textual contexts.

We propose structured machine learning approaches for the different-sized contexts present in long documents. We experiment with tasks on scientific documents, which form strong and varied use cases because they contain structures of varying complexity. We begin with the small-context case of reference strings, where the context lies entirely within the reference string itself. We then gradually extend the necessary context up to keyphrase extraction, where the full document is the context. For small contexts modeled as sequential labeling, we show that Bidirectional Long Short-Term Memory networks with Conditional Random Fields (BiLSTM-CRF) outperform baselines built on rich, handcrafted features. As the context grows to span a few lines, sequential models still work reliably but require more resources and occasionally global features. For even larger contexts of more than a few lines, or whole paragraphs, token-wise sequential models fail completely. For such cases, we propose graph-based deep neural networks that address these shortcomings. We take structured components from traditional graph ranking models such as TextRank and fuse this information with deep neural networks. The resulting models build on complex graph-based learners while explicitly accounting for traditionally known structures.
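TextRank is the traditional graph ranking model named above. It scores words by running a PageRank-style recurrence over a word co-occurrence graph. The sketch below is a minimal, single-word version for illustration only and is not the model proposed in this work. Several details are assumptions:

- the stopword list, which stands in for TextRank's part-of-speech filter;
- the window size;
- the function names `build_cooccurrence_graph`, `textrank`, and `top_keywords`, which are hypothetical.

The step that merges adjacent top-ranked words into multi-word keyphrases is also omitted.

```python
import re

# Hypothetical stopword list: a stand-in for TextRank's part-of-speech
# filter, which keeps only nouns and adjectives as graph vertices.
STOPWORDS = {"a", "an", "and", "for", "in", "is", "of", "on", "the", "to", "we", "with"}


def build_cooccurrence_graph(tokens, window=2):
    """Undirected, unweighted graph over non-stopword tokens.

    Two candidates are linked if they occur within `window` consecutive
    positions of the original token sequence (window size is an assumption).
    """
    words = [t.lower() for t in tokens]
    graph = {}
    for i, word in enumerate(words):
        if word in STOPWORDS:
            continue
        graph.setdefault(word, set())  # keep isolated candidates as nodes
        for other in words[i + 1 : i + window]:
            if other not in STOPWORDS and other != word:
                graph[word].add(other)
                graph.setdefault(other, set()).add(word)
    return graph


def textrank(graph, damping=0.85, max_iter=100, tol=1e-6):
    """Iterate S(v) = (1 - d) + d * sum_{u in N(v)} S(u) / |N(u)| until stable."""
    scores = {node: 1.0 for node in graph}
    for _ in range(max_iter):
        new_scores = {
            node: (1 - damping)
            + damping * sum(scores[nb] / len(graph[nb]) for nb in graph[node])
            for node in graph
        }
        delta = max((abs(new_scores[n] - scores[n]) for n in graph), default=0.0)
        scores = new_scores
        if delta < tol:
            break
    return scores


def top_keywords(text, k=5, window=2):
    """Return the k highest-scoring single words (ties broken alphabetically)."""
    tokens = re.findall(r"[a-z0-9]+", text.lower())
    scores = textrank(build_cooccurrence_graph(tokens, window))
    return sorted(scores, key=lambda w: (-scores[w], w))[:k]


print(top_keywords("graph neural network graph ranking graph keyphrase", k=1))
# ['graph']
```

Here the ranking relies only on graph structure: "graph" co-occurs with the most distinct words, so it receives the highest score. In the fused models described above, this kind of structure is combined with deep neural networks. The sketch shows only the classical ranking step.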