Selective Exploration Methods for Experience Transfer in Reinforcement Learning

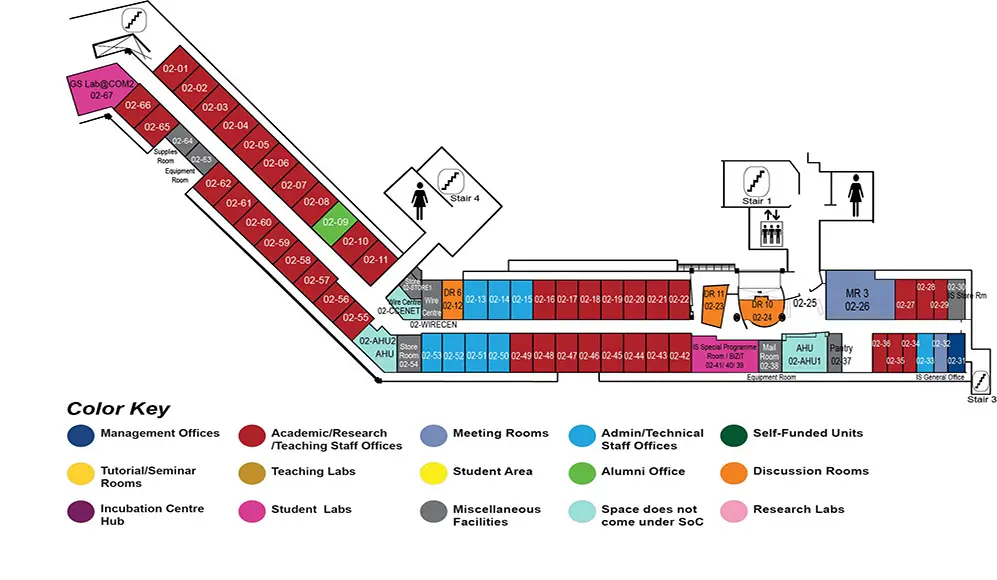

COM2 Level 2

MR3, COM2-02-26

Abstract:

Transfer learning, the reuse of previously learned knowledge, can speed up learning in many reinforcement learning tasks. In this work, we propose a new selective exploration framework (SEF) for experience transfer to solve problems that require fast responses adapted from incomplete prior knowledge. We consider the setting where the source and target tasks share similar objectives but differ in their transition dynamics; for example, a robotic agent operating in similar but challenging environments such as care homes and hospital wards.

In this proposal, we focus on policy transfer. Policy reuse is achieved by identifying the sub-spaces of the target environment that differ from the source, where the source knowledge is insufficient. We present the selective exploration and policy transfer (SEAPoT) algorithm, an instantiation of the selective exploration framework. We describe methods to construct sub-spaces for local exploration and a strategy that selectively and efficiently explores the target task. We define a task similarity metric based on the Jensen-Shannon distance between the tasks' transition-probability distributions. We demonstrate the flexibility of the proposed framework by incorporating different exploration mechanisms for learning. We demonstrate the efficacy of SEAPoT in large experiments, using real-world scenarios modeled in Minecraft and discrete grid-world environments as test beds, and show empirically that our method outperforms state-of-the-art policy reuse methods in terms of jump start and cumulative average reward.
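As a rough illustration of the similarity metric mentioned above, the following sketch computes the Jensen-Shannon distance between the next-state distributions of two tabular tasks. It is a minimal sketch under stated assumptions, not the SEAPoT implementation: the function names, the nested-list layout `T[s][a][s']`, and the choice to average the per-pair distances into a single task-level score are all hypothetical, since the abstract does not say how distances are aggregated.

```python
import math

def kl_divergence(p, q):
    # KL(p || q) in bits; terms with p_i == 0 contribute nothing.
    return sum(pi * math.log2(pi / qi) for pi, qi in zip(p, q) if pi > 0)

def js_distance(p, q):
    # Jensen-Shannon distance: square root of the JS divergence.
    # With base-2 logarithms the value lies in [0, 1].
    m = [0.5 * (pi + qi) for pi, qi in zip(p, q)]
    jsd = 0.5 * kl_divergence(p, m) + 0.5 * kl_divergence(q, m)
    return math.sqrt(max(jsd, 0.0))  # guard against tiny negative rounding

def task_distance(T_src, T_tgt):
    # T[s][a] is a probability distribution over next states (assumed layout).
    # Returns the mean distance (an assumed aggregation) and the per-(s, a) map.
    per_pair = {}
    for s, (rows_src, rows_tgt) in enumerate(zip(T_src, T_tgt)):
        for a, (p, q) in enumerate(zip(rows_src, rows_tgt)):
            per_pair[(s, a)] = js_distance(p, q)
    mean = sum(per_pair.values()) / len(per_pair)
    return mean, per_pair

# Toy example: two states, one action; only state 0 has changed dynamics.
T_src = [[[0.9, 0.1]], [[0.0, 1.0]]]
T_tgt = [[[0.1, 0.9]], [[0.0, 1.0]]]
mean, per_pair = task_distance(T_src, T_tgt)
changed = [sa for sa, d in per_pair.items() if d > 0.0]
print(mean, changed)
```

In this toy setup, the state-action pairs with a non-zero distance are the ones where the source dynamics no longer hold, which is one plausible way to flag candidate regions for local exploration.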

Going further, when the agent detects that the source and target tasks are dissimilar, knowledge can still be reused through policy fragments (partial policies extracted from source-task policies) that remain relevant in the target task.